- What is the difference between traditional SEO and AI optimization

- How to perform technical optimization of a website for artificial intelligence

- How to optimize content for AI systems

- Off-Page GEO: from links to reputation

- Is it worth optimizing a website for artificial intelligence at all

In 2026, the number of clicks from search results to websites continues to decline: AI Overview blocks appear in 48% of search queries, and people get instant answers in ChatGPT, Perplexity, and Claude. It is no longer enough to work on SEO alone; GEO, AEO, AIO, and other types of optimization have joined the list. The effort pays off, because the traffic that still comes from links within AI responses converts into purchases about 5 times more effectively than regular search traffic. Knowing how to optimize a website for AI search can therefore bring results you might not have thought possible. The key principles of website optimization remain unchanged; you only need to learn the peculiarities of AI systems.

What is the difference between traditional SEO and AI optimization

In classic SEO, you need to optimize the website for ranking: you strive for your page to rank higher than others in the results list, thus increasing the chances that the user will click on it. In optimization for artificial intelligence, the focus is on citation: you want the AI to include your information, brand, and viewpoint in its response.

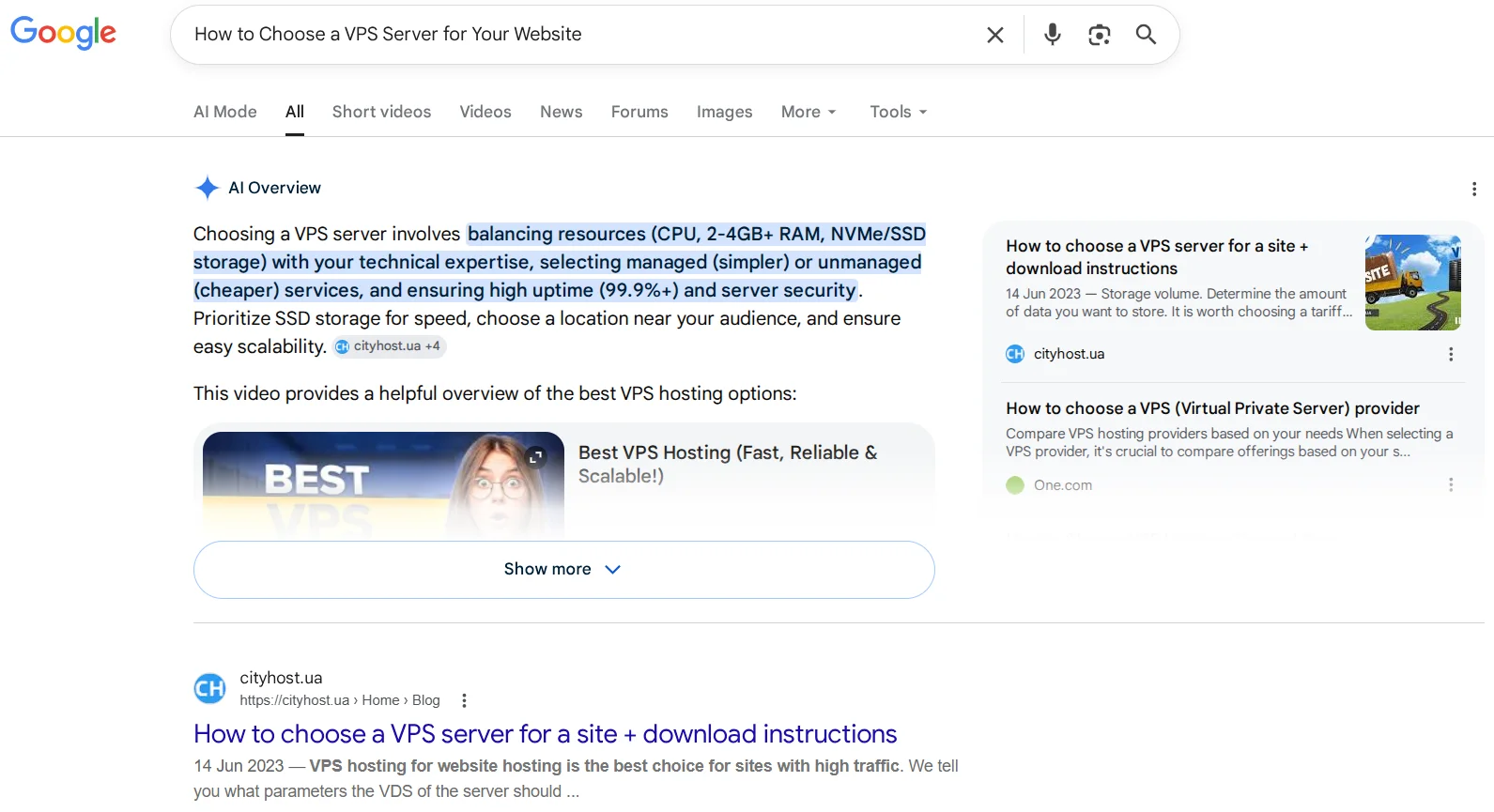

Example of TOP sources from AI Overviews

These different goals stem from fundamental differences in how AI bots and traditional search crawlers such as Googlebot work:

- Traditional search engines create an index, a kind of library of addresses. When a user enters a query, the engine searches the index and returns a list of addresses. The list and its order depend on how relevant the text is to the query and on the authority of the source itself (with an emphasis on backlinks). In other words, the crawler does not study your text in depth; it classifies it.

- Some AI systems respond solely from a trained model, while others use RAG (Retrieval-Augmented Generation) for real-time responses. In the latter case, when a user enters a query, the AI performs a quick search, extracts text snippets from the most promising websites, and sends them to the model to generate a response. For website owners, both scenarios are important: to get into the training data and to be a source for RAG extraction.
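The retrieval step of the second scenario can be sketched roughly as follows. This is a toy illustration, not a description of how any particular AI system works: the word-overlap scoring, the `Snippet` type, and the example.com URLs are assumptions made for the sketch, while real systems rely on search indexes and embedding models.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    url: str   # hypothetical source page
    text: str  # passage extracted from that page


def score(query: str, snippet: Snippet) -> int:
    """Toy relevance: number of distinct query words that occur in the snippet."""
    query_words = set(query.lower().split())
    snippet_words = set(snippet.text.lower().split())
    return len(query_words & snippet_words)


def retrieve(query: str, snippets: list[Snippet], top_k: int = 2) -> list[Snippet]:
    """Return the top_k snippets with the highest overlap score."""
    return sorted(snippets, key=lambda s: score(query, s), reverse=True)[:top_k]


def build_prompt(query: str, sources: list[Snippet]) -> str:
    """Assemble the text that would be passed to the language model."""
    context = "\n".join(f"[{i}] {s.url}: {s.text}" for i, s in enumerate(sources, 1))
    return f"Answer using only these sources and cite them.\n{context}\n\nQuestion: {query}"


snippets = [
    Snippet("https://example.com/vps", "a vps is a virtual private server with dedicated resources"),
    Snippet("https://example.com/cats", "cats sleep most of the day"),
    Snippet("https://example.com/hosting", "shared hosting is cheaper than a dedicated machine"),
]
top = retrieve("what is a vps server", snippets)
prompt = build_prompt("what is a vps server", top)
```

Even in this simplified form, the point for a site owner is visible: only the passages that answer the question directly make it into the prompt, and only those can be cited.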

| Characteristic | Traditional SEO | AI Optimization (GEO/AEO) |
|---|---|---|
| Main mechanism | Indexing and ranking of pages | Generation of responses based on RAG |
| Type of query | Set of keywords (e.g., «buy a car») | Conversational phrase (e.g., «what car is best for a family with 3 children?») |
| Main goal | High positions in the list of links to get clicks from search results | Getting into the AI response (ChatGPT, Perplexity, Gemini, or AI Overviews) as a source or citation |
| Key factors | Keywords, backlinks | Structured data (Schema), authority (E-E-A-T), relevance of context, and citation |
| Content format | Articles optimized for specific search queries | Clear facts, direct answers to questions, deep expertise, and live cases |

The difference lies not only in how the bots work and what the website owner is aiming for, but also in where the answers appear. In SEO, we optimize pages to get into search results (Google, Bing, Yahoo!), whereas with artificial intelligence the web resource needs to be cited both in search results and in specific chatbots. Hence the confusion of terms: GEO, AIO, AEO.

Despite important differences, SEO and AI are gradually converging. The requirements of search bots are becoming increasingly similar to those of AI bots: clear answers to user queries, personal experience, deep understanding of the topic, structured data, links from authoritative sources, rather than just hundreds of backlinks from directories. For example, the Cityhost article on choosing a VPS server ranks first in Google results and second in AI Overview. In writing it, Bohdana Haivoronska and Andrii Zarovynskyi took key points from standard search engine optimization, added modern search engine requirements, and skillfully combined them with the peculiarities of artificial intelligence.

Read also: Artificial intelligence — friend, lover, psychologist

How to perform technical optimization of a website for artificial intelligence

For artificial intelligence, robots.txt, the sitemap, page speed, clean code, absence of duplicates, mobile adaptability, and a high level of security are as important as for search engines. With the development of AI, markup has become even more important, as its absence or incorrect configuration can become a major obstacle to citation by AI systems. In 2024, the llms.txt format was also proposed; it helps AI obtain key information about the internet project.

- Basic technical optimization for artificial intelligence

- Special requirements for Robots.txt in 2026

- How to create an llms.txt file for artificial intelligence

- How to configure Schema.org microdata for AI systems

Basic technical optimization for artificial intelligence

Technical optimization for artificial intelligence almost completely coincides with classic SEO — and this is good news. If the website is technically sound from Google's perspective, it is already halfway ready for AI visibility. The difference lies in a few new requirements and a shift in priorities:

- Core Web Vitals. Largest Contentful Paint (LCP), the loading time of the largest visible element, should be no more than 2.5 seconds. Interaction to Next Paint (INP), the response time of the page to a user action, should not exceed 200 ms. Cumulative Layout Shift (CLS), which measures how much the layout jumps while heavy elements load, is a unitless score that should stay at 0.1 or below. The Cityhost blog has useful material on the main loading speed metrics that are important for both SEO and GEO.

- Sitemap. The main requirement remains — the presence of a sitemap.xml file in the root of the site registered in Google Search Console and Bing Webmaster Tools. However, Perplexity and some other AI systems use the sitemap for the initial crawl of the web resource before indexing, so the sitemap must be updated instantly when new content is published, and should not contain deleted or redirecting URLs. If the site is on WordPress, we recommend using Rank Math or Yoast, which are the best SEO plugins with all the necessary functionality for sitemap.xml.

- Security. HTTPS remains a ranking signal for search engines, so an SSL/TLS certificate is a mandatory condition for successful promotion in both search and AI systems.

- Code cleanliness. Here AI has slightly raised the requirements, as many AI bots are unable to execute scripts. It is better to avoid relying on client-side rendering (CSR) with heavy JavaScript in favor of server-side rendering (SSR), so that the main content is present in the initial HTML.

- Mobile adaptability. It has long been important to adapt the site for mobile devices, but it is not just about using the right templates or layouts. Check each page on different devices, as often the web resource is professionally adapted, but a specific page displays incorrectly on a smartphone or tablet due to specific blocks or interactive elements.

- Broken links. Regularly check the site with Screaming Frog (free for up to 500 URLs) or Ahrefs Webmaster Tools for internal links that lead to non-existent pages.

- Duplicates. In 2026, the main types of duplicates are canonical and parameter duplicates, as well as category and tag duplicates, which are often found on WordPress.

- Redirects. Using them is normal practice, but avoid chains, where a URL leads through 2-3 intermediate addresses to the final one. There should always be a single redirect: the old address leads the user straight to the new one.
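The redirect rule from the checklist above is easy to verify automatically. Below is a minimal sketch that assumes you already have a redirect map (old path to new path) exported from a crawler or the server configuration; the paths and function names are hypothetical.

```python
def resolve_redirect(url: str, redirects: dict[str, str], max_hops: int = 10) -> list[str]:
    """Follow redirects starting at url and return every address visited, in order."""
    path = [url]
    while path[-1] in redirects:
        nxt = redirects[path[-1]]
        if nxt in path or len(path) > max_hops:
            raise ValueError(f"redirect loop or too many hops starting at {url}")
        path.append(nxt)
    return path


def find_chains(redirects: dict[str, str]) -> dict[str, list[str]]:
    """Return {source: full path} for every source that needs more than one hop."""
    chains = {}
    for source in redirects:
        path = resolve_redirect(source, redirects)
        if len(path) > 2:  # source -> intermediate -> ... -> final
            chains[source] = path
    return chains


def flatten(redirects: dict[str, str]) -> dict[str, str]:
    """Point every source directly at its final destination."""
    return {source: resolve_redirect(source, redirects)[-1] for source in redirects}


# Hypothetical redirect map: /old-page reaches /new-page only through /interim.
redirects = {"/old-page": "/interim", "/interim": "/new-page", "/promo": "/sale"}
chains = find_chains(redirects)
fixed = flatten(redirects)
```

Here `chains` reports `/old-page` as a chain, and `fixed` maps it straight to `/new-page`, which is the form the rules should take on the server.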

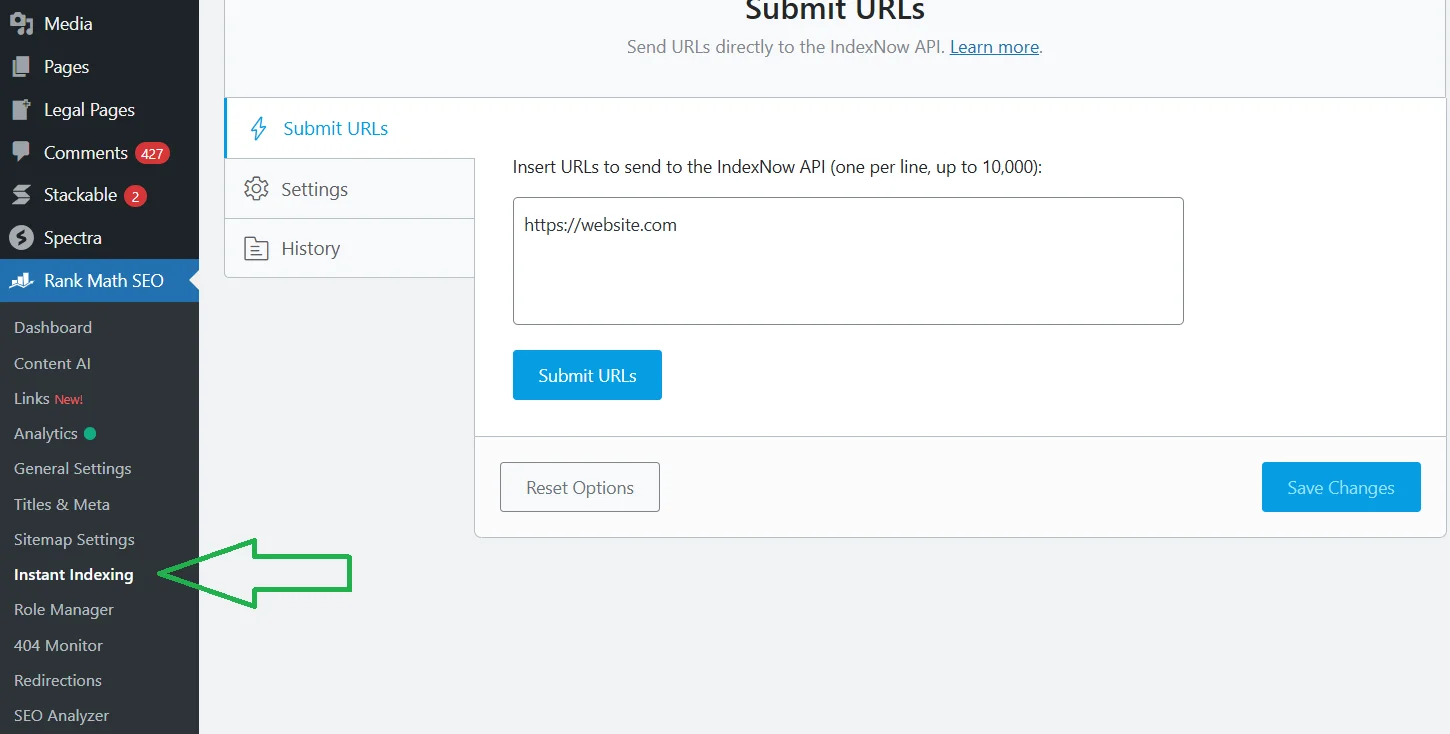

I want to highlight IndexNow, a protocol for instantly notifying search and AI systems about content updates. For website owners on WordPress, there are comprehensive SEO plugins that support this protocol. For example, I use the free version of Rank Math, which supports instant indexing of both individual pages and large batches (up to 10,000 URLs at once).

In the Rank Math section → Instant Indexing, you first specify the list of URLs (each on a new line), then submit them for indexing and save changes.
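For sites without a plugin, the same submission can be prepared by hand. The sketch below follows the IndexNow request format as I understand it from the public protocol description (a JSON POST with `host`, `key`, and `urlList`); the key value and the example.com URLs are placeholders, and the function only builds the requests so that nothing is sent by accident.

```python
import json
from urllib.parse import urlparse
from urllib.request import Request

INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"
MAX_URLS_PER_REQUEST = 10_000  # batch limit mentioned above


def build_indexnow_requests(urls, key, key_location=None):
    """Build (but do not send) IndexNow POST requests for URLs of a single host."""
    if not urls:
        return []
    host = urlparse(urls[0]).netloc
    if any(urlparse(u).netloc != host for u in urls):
        raise ValueError("all URLs in one submission must belong to the same host")

    requests = []
    for start in range(0, len(urls), MAX_URLS_PER_REQUEST):
        payload = {
            "host": host,
            "key": key,  # the same key must be published as a text file on the site
            "urlList": urls[start:start + MAX_URLS_PER_REQUEST],
        }
        if key_location:
            payload["keyLocation"] = key_location
        requests.append(
            Request(
                INDEXNOW_ENDPOINT,
                data=json.dumps(payload).encode("utf-8"),
                headers={"Content-Type": "application/json; charset=utf-8"},
                method="POST",
            )
        )
    return requests


# Placeholder key and URLs; to actually submit, pass each request to
# urllib.request.urlopen().
pending = build_indexnow_requests(
    ["https://example.com/blog/new-post", "https://example.com/blog/updated-post"],
    key="your-indexnow-key",
)
```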

Special requirements for Robots.txt in 2026

You can create a robots.txt file in a few minutes using online services or WordPress plugins such as Rank Math, Yoast, or All in One SEO. But for optimizing a site for artificial intelligence, the classic content of the file is not enough. You need to clearly distinguish between bots that collect data for training and bots that perform live search, so that you do not accidentally block useful AI systems.

Check whether the following AI user agents are allowed in robots.txt:

- Google-Extended: Gemini (a control token honored by Google's crawlers rather than a separate bot);

- ClaudeBot: Anthropic (Claude);

- PerplexityBot: Perplexity;

- ChatGPT-User and OAI-SearchBot: ChatGPT (user-initiated requests and search).

Many websites block these bots by default because of security settings, for example in Cloudflare. To grant access, list each bot in robots.txt in a «User-agent» line followed by «Allow: /», and check that the firewall or CDN rules do not block the same bots, since robots.txt cannot override them.

Some AI bots that collect data for training are better off being blocked:

- GPTBot;

- Google-CloudVertexBot;

- Bytespider;

- CCBot;

- meta-externalagent;

- Amazonbot (if you do not sell products on Amazon and do not benefit from Alexa).

You can block their access in the robots.txt file using «Disallow: /». It is important to understand that this file is only a recommendation: large corporations usually follow the rules, while Bytespider and similar bots ignore them. It is still worth disallowing such bots and adding protection at the network level, for example with Cloudflare.
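The two lists above can be turned into a robots.txt file and checked before deployment. The following is a minimal sketch using Python's standard `urllib.robotparser`; the sitemap URL is a placeholder, the split into allowed and blocked bots simply mirrors the lists in this section, and the Amazon crawler is written with its user-agent token `Amazonbot`.

```python
from urllib.robotparser import RobotFileParser

# Bots from the "allow" list in this section (live search and user requests).
SEARCH_BOTS = ["Google-Extended", "ClaudeBot", "PerplexityBot", "ChatGPT-User", "OAI-SearchBot"]
# Bots from the "block" list in this section (training data collection).
TRAINING_BOTS = ["GPTBot", "Google-CloudVertexBot", "Bytespider", "CCBot",
                 "meta-externalagent", "Amazonbot"]


def build_robots_txt(allowed, blocked, sitemap_url):
    """Return robots.txt text with one group per policy and a default group."""
    lines = [f"User-agent: {bot}" for bot in allowed]
    lines += ["Allow: /", ""]
    lines += [f"User-agent: {bot}" for bot in blocked]
    lines += ["Disallow: /", ""]
    lines += ["User-agent: *", "Allow: /", "", f"Sitemap: {sitemap_url}"]
    return "\n".join(lines) + "\n"


robots_txt = build_robots_txt(SEARCH_BOTS, TRAINING_BOTS, "https://example.com/sitemap.xml")

# Parse the generated text the way a well-behaved crawler would.
parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

for bot in SEARCH_BOTS + TRAINING_BOTS:
    verdict = "allowed" if parser.can_fetch(bot, "https://example.com/blog/") else "blocked"
    print(f"{bot}: {verdict}")
```

The check only confirms what the file says. Bots that ignore robots.txt still have to be stopped at the network level.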

How to create an llms.txt file for artificial intelligence

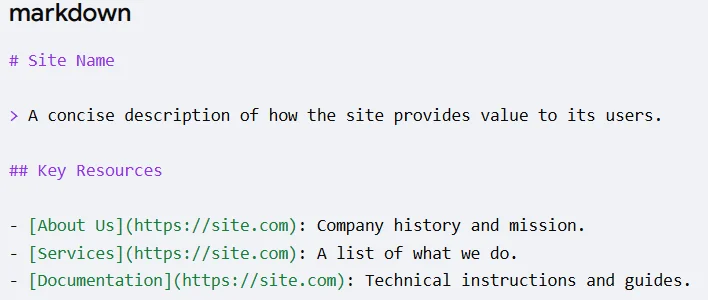

You can create this file in a standard text editor, such as Notepad or VS Code. The document should be named llms.txt, not LLMS.txt. It should include:

- # Project name — main header.

- > Short description — essence of the site.

- ## Sections.

Then save the file and place it in the root folder of the site, where robots.txt is already located. You can immediately check it at yoursite.com/llms.txt. If the project is large, you can also create llms-full.txt with many more links and detailed information, while keeping the main llms.txt as a concise introduction.
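If you prefer to generate the file rather than type it, the structure described above can be produced with a few lines of code. This is a sketch under assumptions: the link-list format inside each section (`- [title](url): note`) follows the public llms.txt proposal as I understand it, and the site name, sections, and URLs are placeholders.

```python
def build_llms_txt(name, summary, sections):
    """Build llms.txt text.

    sections maps a section title to a list of (link title, url, note) tuples.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    for title, links in sections.items():
        lines.append(f"## {title}")
        lines.append("")
        for link_title, url, note in links:
            lines.append(f"- [{link_title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content for a hypothetical site.
llms_txt = build_llms_txt(
    name="Example Shop",
    summary="Online store of home appliances with buying guides and reviews.",
    sections={
        "Guides": [
            ("Choosing an air conditioner", "https://example.com/guides/ac", "Step-by-step buying guide"),
        ],
        "Company": [
            ("Pricing", "https://example.com/pricing", "Plans and prices"),
        ],
    },
)

# To publish, save the text as llms.txt in the site root, for example:
# pathlib.Path("llms.txt").write_text(llms_txt, encoding="utf-8")
```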

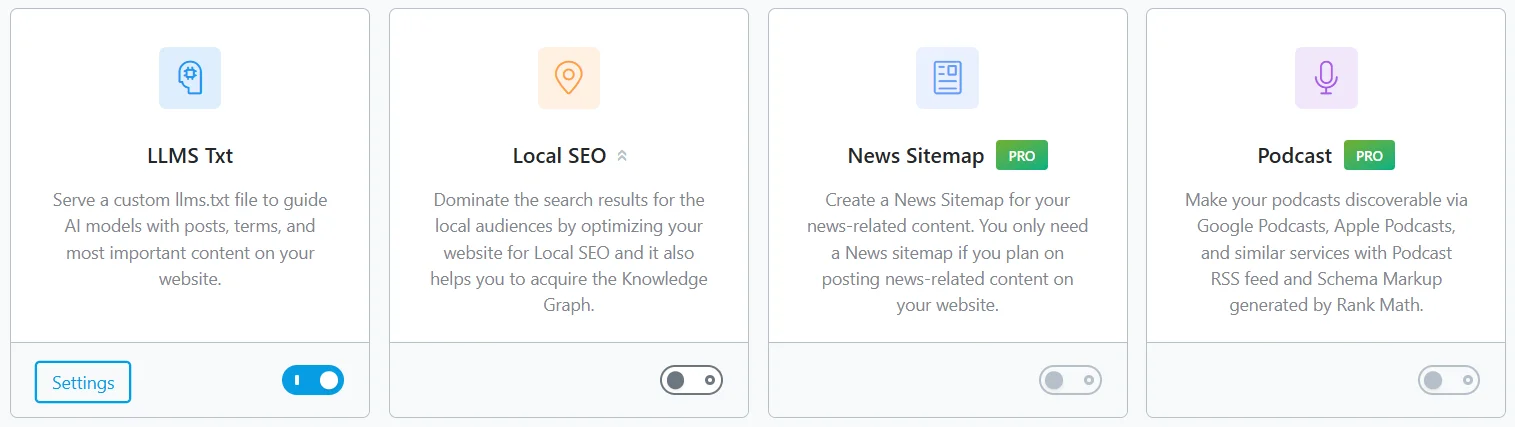

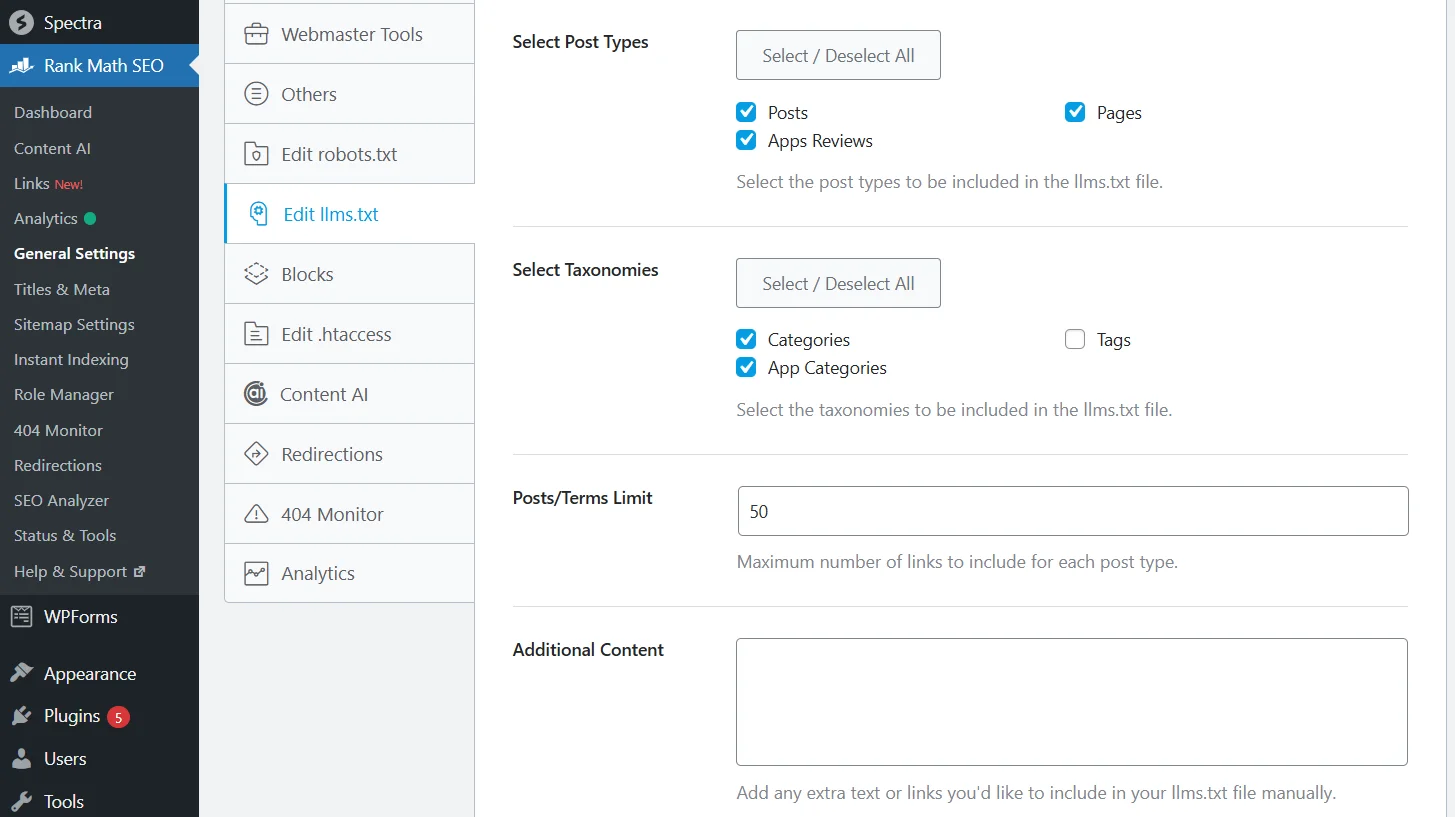

For WordPress, there are many plugins for automatically creating llms.txt, including LLMs.txt Generator and Website LLMs.txt. Moreover, classic SEO plugins for website optimization also support this file. I specifically use the module for Rank Math — LLMS Txt.

The module is available in the free version with instant activation.

LLMS Txt from Rank Math supports both standard and custom post types.

How to configure Schema.org microdata for AI systems

In classic SEO, Schema helped obtain rich snippets in the results to stand out among competitors. In the era of artificial intelligence, microdata has become even more important, as it allows AI to clearly understand the type of content.

The main types of Schema include:

- FAQPage — a direct source for generating AI responses, thus the best way to receive mentions and links from artificial intelligence responses.

- Organization — establishes brand identity, including logo, contacts, and social media of the company.

- Article — standard microdata for posts, which must include fields dateModified (AI pays special attention to the relevance of data) and author with a link to the expert's profile.

- Review — useful markup that I use for reviews.

- Product — extremely important for e-commerce, as it allows AI to extract data about price, availability, and reviews for product comparison.
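As an illustration of the Article type from the list above, here is a minimal sketch that builds the markup as JSON-LD, one of the formats Schema.org markup can be written in. The property names (`headline`, `datePublished`, `dateModified`, `author`) come from the Schema.org vocabulary; the headline, author, and URLs are placeholders.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, author_url, published, modified):
    """Return a <script> tag with Article markup, including dateModified and author."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {
            "@type": "Person",
            "name": author_name,
            "url": author_url,  # link to the expert's profile page
        },
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values for a hypothetical article.
snippet = article_jsonld(
    headline="How to choose a VPS server",
    author_name="Jane Doe",
    author_url="https://example.com/authors/jane-doe",
    published=date(2025, 6, 1),
    modified=date(2026, 1, 15),
)
print(snippet)
```

The resulting tag goes into the page's HTML, typically in the head section.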

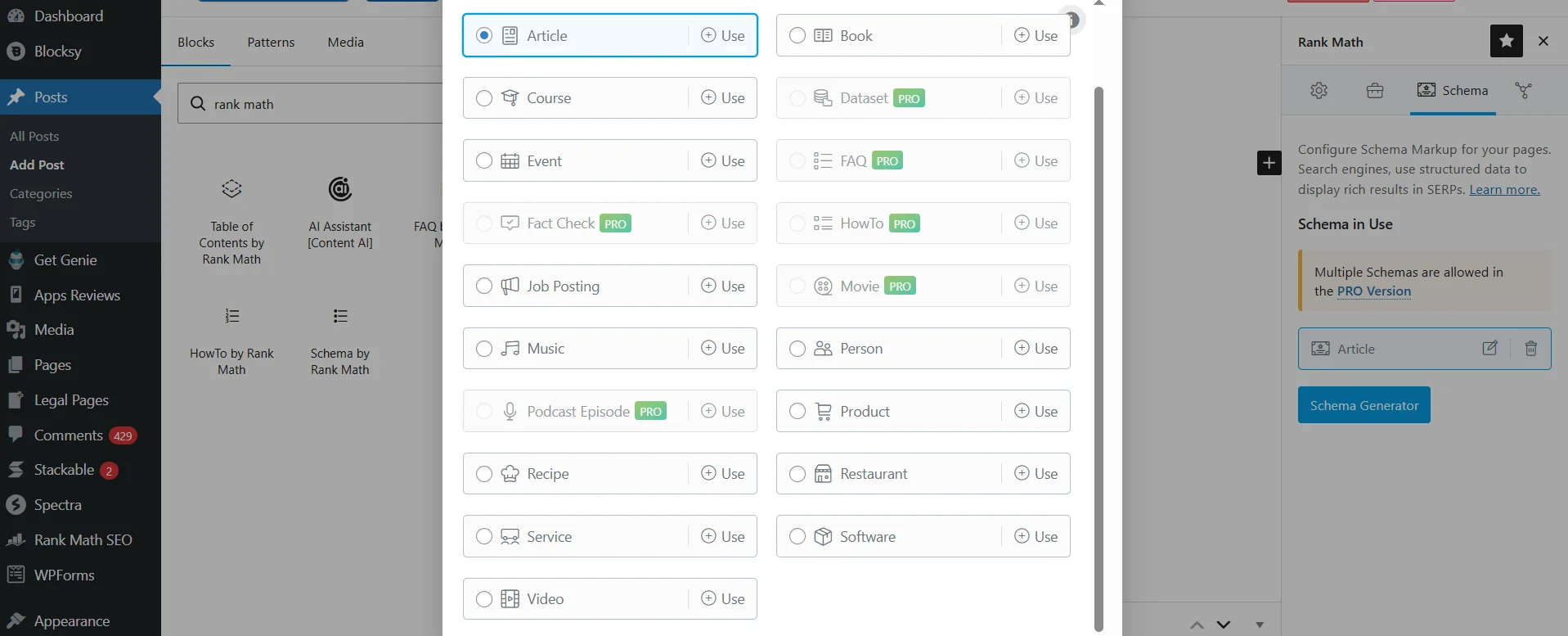

To generate the markup, you can use an online Schema.org markup generator. If you want to simplify the process, it is worth using one of the popular SEO plugins, such as Rank Math.

However, you will run into the limitations of the free version: some schemas are available only in the paid version, and it is technically not possible to add several markups to one page. But if you insert, for example, questions and answers through FAQ by Rank Math directly on the page, everything is set up and indexed. Just remember to check the result in the Rich Results Test to make sure the data is read correctly.
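When a plugin does not cover your case, FAQ markup can also be assembled by hand. The sketch below builds a FAQPage object with the Schema.org properties `mainEntity`, `Question`, and `acceptedAnswer`; the helper name is illustrative, and the question is a sample. The output still needs to be wrapped in a `script` tag of type `application/ld+json` and validated.

```python
import json


def faq_jsonld(qa_pairs):
    """Build FAQPage markup from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Sample question and answer; replace with the ones visible on the page.
faq = faq_jsonld([
    (
        "How to calculate the power of an air conditioner?",
        "Divide the area of the room by 10: a 20 m² room needs about 2 kW.",
    ),
])
print(json.dumps(faq, indent=2, ensure_ascii=False))
```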

Read also: The best AI services for SEO: optimizing the site without unnecessary effort

How to optimize content for AI systems

To create content that AI systems will cite, shift from asking «What keywords do people enter?» to asking «What questions do users ask, and what exact answer do they expect?». Your goal is to create a self-contained block of text that artificial intelligence can extract from the page and insert into its response to the user without corrections.

To achieve this, you should use a modern content structure:

- H2 header in the form of a common user query. For example, «How to calculate the power of an air conditioner?».

- Direct answer immediately under the header, without long introductions. For example, «The area of the room should be divided by 10. Accordingly, if this is a 20 m² room, you need an air conditioner of 2 kW, 40 m² — 4 kW».

- Deep detailing, where you add unique data, personal cases, or professional nuances. For example, «For better accuracy, do not ignore additional heat sources. From my own experience, I can say that adding 0.1 kW for each person and 0.5 kW for equipment allows…».

Besides the structure of the blocks, the look of the text itself matters. AI systems process much more easily, and cite more often, materials with paragraphs of 3-5 sentences, numbered and bulleted lists, and tables.

Why personal experience has become a mandatory requirement

In 2023-2025, AI systems faced a massive influx of uniform content generated by AI itself. In response, models began to give a clear advantage to sources with signals of real human experience. That is why, despite the importance of specificity, especially in the first paragraph after the subheading, your site should not become a reference guide, as such web resources simply cannot compete with artificial intelligence.

On the contrary, personal experience is now critically important for both humans and AI. This is confirmed by the E-E-A-T concept, where the first «E» — Experience — appeared in 2022 as a response from Google to the rise of impersonal content. Artificial intelligence adopted this same principle of selecting reliable sources for citation: material without an author and without experience cannot be considered a reliable source.

You can showcase your experience and expertise on the site through:

- Author pages. It is no longer enough to simply put a name under the article. It is important for the author to have a separate page that indicates their specialization, experience, education, examples of real projects or publications.

- Author signatures. Each material should have an author block with a photo, name, short bio, and a link to this page. This applies not only to blogs or online magazines but also to companies: select several team members, create profiles for them, and publish on their behalf.

- First person. Do not turn your article into a personal diary, but show your experience where appropriate. For example, «I tested 5 hosting providers for a month and chose the most reliable cheap hosting for the site» or «This power bank has fallen dozens of times, and externally it looks like new».

- Publication and update dates. AI systems operating in real-time take into account the relevance of the source. An article that was updated 3 months ago will receive priority over material last updated in 2023.

Thus, content that AI cites is built at the intersection of three things: a clear structure for quick extraction of answers, real experience as a signal of source reliability, and a living authorial voice that people want to return to the site.

Read also: What is tone of voice — how a brand should speak to its audience

Off-Page GEO: from links to reputation

Over the past two years, significant changes have occurred in the approach to external optimization from both search engines and artificial intelligence. Previously, links served one function — transferring «weight» from one domain to another, and the algorithm counted the number and quality of these connections. In 2026, links remain important, but for AI, they are more of a proof of the source's reliability than a key criterion for its selection.

As a result, the well-known types of Off-Page SEO have become equally important for artificial intelligence:

- Brand mentions. When an authoritative publication writes «according to Cityhost» or «as noted by Cityhost content marketer Bohdana Haivoronska» — AI systems record this as a signal of brand recognition and reliability. One such mention without a link is more important than 10 weak backlinks.

- Citations on high-authority web resources. Backlinks from reputable publications with DA 70+ remain important, and their value has significantly increased. It is better to obtain a few such links than hundreds from directories.

- Author authority. In classic SEO, domain and author authority were essentially the same. In GEO — these are two different signals, and both are important. If your author is cited somewhere or writes articles under their name for other publications, then their content on your site can receive a great boost.

You can obtain mentions of the site on Reddit and Quora by participating in relevant discussions and answering thematic questions. An effective platform for gaining both linking weight and thematic citation is Medium: create an account and write articles on topics similar to your site, inserting links (better not to spam, one or two is enough) and mentioning your internet project or company. Although the Ukrainian community on Medium is constantly growing, competition is still low, especially compared to the English-speaking segment.

Is it worth optimizing a website for artificial intelligence at all

Yes, but do not blow it out of proportion or panic that «SEO is dying». It has been «dying» since 2003, when Google rolled out the Florida Update, and since 2011, when the Panda Update began the fight against duplicate and weak content. Recent years have brought many more changes and updates; artificial intelligence has indeed taken a large share of traffic, but the traffic that remains is of higher quality.

According to Superprompt data for 2025, AI search converts much better than traditional organic search: conversion from Google averages 2.8%, from Perplexity 12.4%, from ChatGPT 14.2%, and from Claude 16.8%. A user who comes to you through Perplexity or ChatGPT is therefore roughly four to five times more likely to take a targeted action, such as purchasing a product or using a service, than a person from Google.
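As a quick check, the multiples can be recomputed from the percentages quoted above. The snippet is a small arithmetic sketch: the dictionary restates the cited figures, and the function name is illustrative.

```python
# Conversion rates (%) as quoted from the Superprompt data above.
conversion_rates = {"Google": 2.8, "Perplexity": 12.4, "ChatGPT": 14.2, "Claude": 16.8}


def lift_over_baseline(rates, baseline="Google"):
    """Return how many times each source converts better than the baseline."""
    base = rates[baseline]
    return {
        source: round(rate / base, 1)
        for source, rate in rates.items()
        if source != baseline
    }


lifts = lift_over_baseline(conversion_rates)
print(lifts)  # {'Perplexity': 4.4, 'ChatGPT': 5.1, 'Claude': 6.0}
```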

However, interestingly, good classic SEO is still the best strategy for getting into AI responses: according to Ahrefs data for 2025, 76.1% of URLs cited in Google AI Overviews also rank in the TOP 10 Google for the same query. That is, the foundation in the form of website search optimization remains, and specific requirements of AI systems are added to it, which we have already discussed:

- The technical base has hardly changed. Indexing, speed, structure — all still critically important.

- Content has become more structured and complex — from keywords to intents and questions, from anonymous text to verified author experience.

- External SEO has shifted from «backlinks as increasing linking weight» to «backlinks as enhancing site authority».

The key principles (authority, structure, experience, technical accessibility) work across all platforms simultaneously, whether it is Google, ChatGPT, or Claude. They all solve the same task: to find a reliable source for answering a human query. If you are clear and useful to your target audience, and most importantly — ready to share your experience, you will be able to receive traffic from both search engines and AI systems.