In December 2022, the Collaborator platform held a large SEO conference. Anyone could attend for free and listen to 10 lectures from leading specialists in SEO and link building. We attended the lectures and put together a digest of the advice we found most interesting for promoting sites in 2023.

How to rank higher in Google with internal links

When developing a link profile, we usually focus on getting external links from trusted donors. But links from old pages of the site itself can do part of the same job.

As a rule of thumb from the lecture, ten internal links can replace one average link from another site, and 30-50 internal links work for Google on par with one link from a domain with a high DR.

Old articles, news, help pages, promotions or corporate event pages are suitable for promotion through internal links: anything that is not currently part of the SEO strategy. Find pages on the site that have a lot of external links or a good UR, and link from them to the page you are promoting. The main thing is that the old pages contain content relevant to the topic and keywords of the page you want to raise. It is also important that the old page gets little or no traffic anymore.

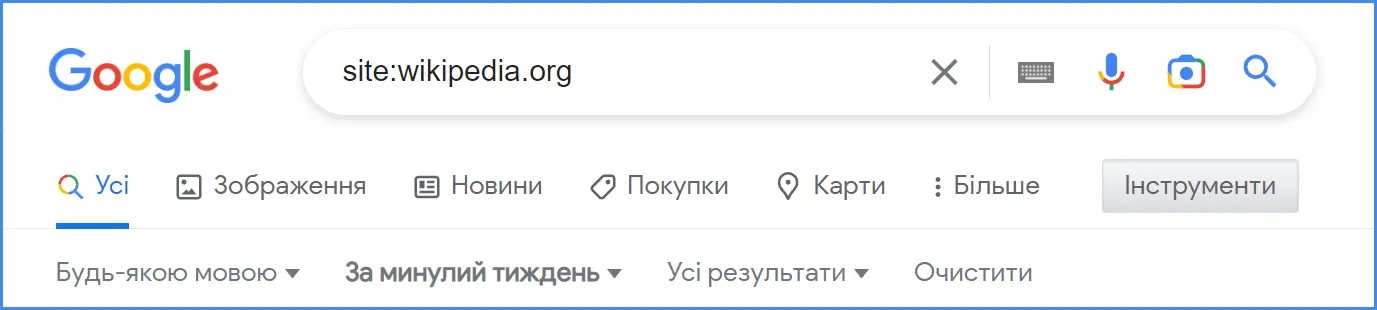

Internal donor pages can be found using the search operator:

site:domain.com {keys}
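As a small illustration, the operator can be turned into a ready-to-open search URL. This is only a sketch: the function name `site_search_url` and the example keywords are assumptions made for the example, not something from the lecture.

```python
from urllib.parse import urlencode


def site_search_url(domain: str, keywords: str) -> str:
    """Build a Google search URL for the query `site:<domain> <keywords>`."""
    query = f"site:{domain} {keywords}"
    # urlencode escapes the colon and turns spaces into plus signs
    return "https://www.google.com/search?" + urlencode({"q": query})


# Hypothetical example: look for old pages about internal links on domain.com
print(site_search_url("domain.com", "internal links"))
```

Open the printed URL in a browser and review the listed pages by hand as candidate internal donors.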

It is worth remembering that Google does not take every link into account. Whether a link counts depends on the following criteria:

Visibility of the link: visual emphasis such as color, underlining, frames, and the size of the text or image used for the link. If the link is set in a small font and blends with the background, the search bot may not take it into account.

Position of the link on the page: header, footer, body text, the beginning of the text or the bottom. Links in headers and at the beginning of text blocks work best.

Anchors should be meaningful. Do not link with "here", "there", "click" or similar anchors.

In the site hierarchy, donor pages should be as close as possible to the main page. The search engine may not take into account links from sections placed deep in the hierarchical tree.
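The anchor criterion above is easy to check automatically. Below is a minimal sketch that lists links with empty or generic anchor text, using only Python's standard `html.parser`. The set `GENERIC_ANCHORS` is an assumption: "here", "there" and "click" come from the text, the other entries are added for illustration.

```python
from html.parser import HTMLParser

# "here", "there", "click" are from the article; the rest are illustrative assumptions
GENERIC_ANCHORS = {"here", "there", "click", "click here", "read more", "more"}


class AnchorAudit(HTMLParser):
    """Collects (href, anchor text) pairs for every <a href=...> on a page."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def weak_anchors(html: str) -> list[tuple[str, str]]:
    """Return links whose anchor text is empty or generic.

    Image-only links have no text and are reported as empty anchors.
    """
    parser = AnchorAudit()
    parser.feed(html)
    parser.close()
    return [(href, text) for href, text in parser.links
            if not text or text.lower() in GENERIC_ANCHORS]
```

For example, `weak_anchors('<a href="/x">Click here</a>')` reports the link, while a link with a descriptive anchor is left alone.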

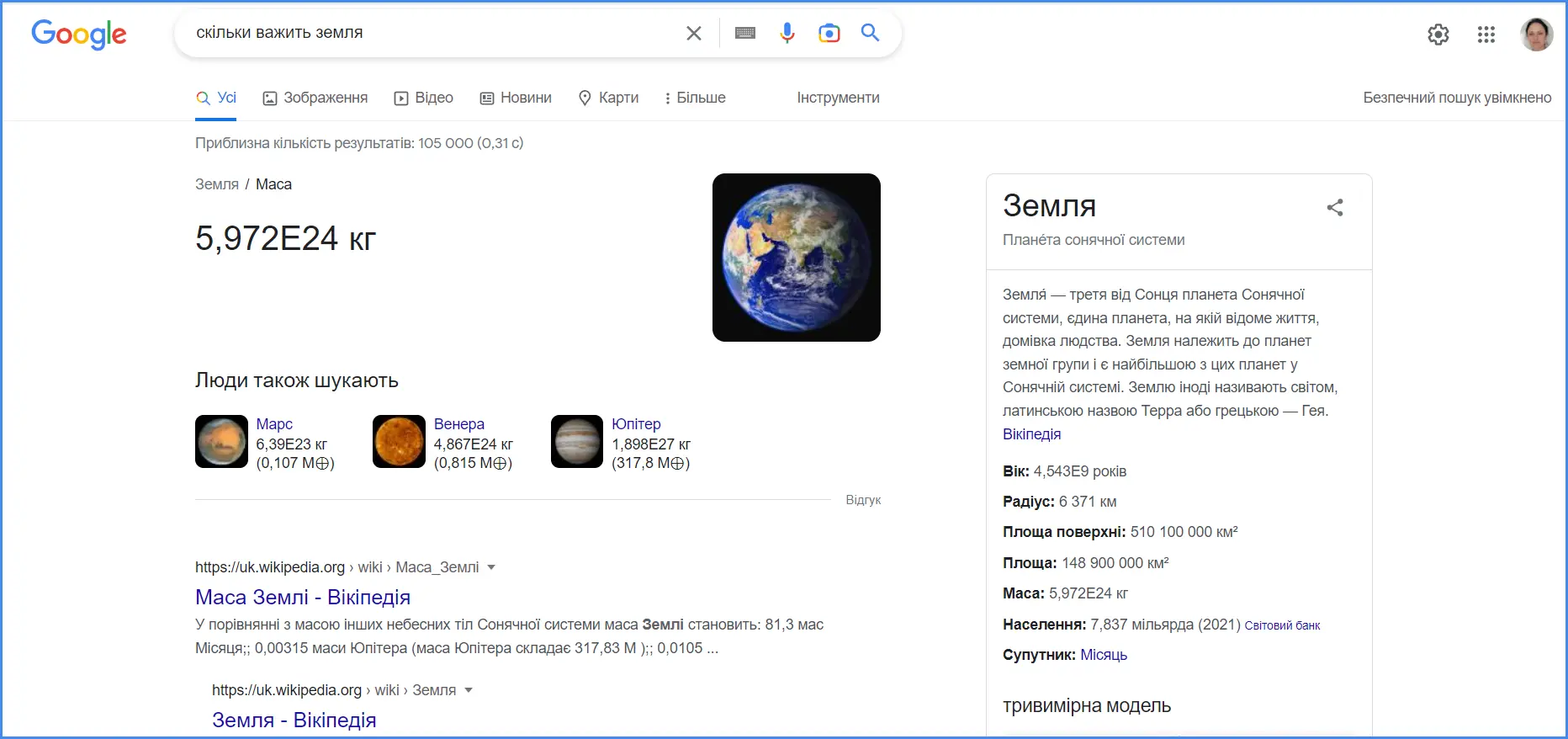

How to get into the knowledge graph and earn Google's trust

To begin with, let's recall what Google's knowledge graph is and what it looks like. It is a block with a short answer to the user's query that appears at the top of the search results.

To get into the knowledge graph, you need to increase Google's trust in the site. There are several methods for this.

Build your reputation so that users look for your site by name and type it into the address bar themselves. For this, you need to work on brand visibility. According to Google's logic, if users search for a site by brand name, the brand is trusted offline.

Add the brand to encyclopedias, directories, review sites and social networks. Google responds positively to a brand being present on other global platforms. If you are mentioned on Wikipedia, have reviews on review sites, and have pages on the most popular social networks, it means that the company is actively promoting itself in various directions, has been on the market for some time and has a reputation.

But this should be done carefully. First, all of these web resources should match the topic of the site itself. Second, on some platforms it is better not to add yourself, because the article may be deleted, and such a removal negatively affects the position of the site. For example, for Wikipedia and Wikidata it is better to ask an editor who has long been registered on these sites to add your company.

And one more tip: increase the quality and expertise of your content. This is important not only for the knowledge graph, but for site promotion as a whole, because Google pays more and more attention to the usefulness and expertise of information.

What content to publish on the blog for better promotion

Continuing the previous topic, most of the speakers at the conference emphasized that content quality is now very important for Google. This section therefore combines advice from several specialists.

If you have a blog that publishes articles, consider these trust indicators from Google:

Information about who wrote the article: author accounts on the blog, information about the specialist consultants who helped, and so on. Ideally, the article is accompanied by an author page that, in addition to other information, lists all of the author's social networks.

Reliable sources of information. List the sources you used while writing the article.

Allow users to react to the article: the site should have widgets for comments, likes and sharing on social networks.

Make very high-quality content. One expert, high-quality article is better than 2-3 pieces that lack expertise and do not study the issue in depth.

Where to get such content? From your own and your clients' cases. Personal experience of developing a project is the best basis for expert materials.

When writing an article based on a case, think about which problem of the reader it solves and which target audience this content will bring to the page.

This approach lets you promote content without a lot of link work. If the article really contains valuable information, there is a high probability that trusted donors will want to link to it themselves.

Who should write the content? If it's a Western audience, you need a native copywriter with experience in the niche they're writing about. If there is no experience, then it is worth involving a consultant, a specialist in the field. The same applies to content for your native audience: the author must have a deep understanding of the topic or work with a consultant. If a non-native speaker is writing for a Western audience, it is worth bringing in an editor with a fluent knowledge of the language.

How to find SEO keywords without Ahrefs and other tools

Ahrefs and similar tools paint a fairly rough picture of semantics, and they are not perfect. But there are additional methods of collecting the semantic core that help to expand the results and are also useful for beginners who do not have access to paid tools.

Collect keywords from the search results: enter a basic industry keyword and see which keywords Google highlights.

Search for keywords on competitors' pages. Go to the top 10 sites that rank for the industry keyword and study their content and structure. This will give insight into their SEO strategy. Use services to analyze which keywords these sites are promoted for.

The questions that users most often ask support are also keywords.

Study how freelancers on freelance exchanges word their offers for relevant services.

These methods let you focus on conversion rather than on search frequency.
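The support-questions tip can be sketched in a few lines: normalize the questions and count which ones repeat. The function name `top_questions` and the sample questions are hypothetical, and in practice the input would come from your own help desk export.

```python
import re
from collections import Counter


def top_questions(questions: list[str], n: int = 3) -> list[tuple[str, int]]:
    """Return the n most frequent questions after simple normalization."""
    normalized = []
    for question in questions:
        text = re.sub(r"[^\w\s]", "", question.lower())  # drop punctuation
        text = " ".join(text.split())                    # collapse whitespace
        if text:
            normalized.append(text)
    return Counter(normalized).most_common(n)


# Hypothetical sample of support requests
sample = [
    "How do I reset my password?",
    "how do i reset my password",
    "Where is my invoice?",
]
print(top_questions(sample, n=1))
```

The most frequent questions are candidates for keywords and for dedicated help or blog pages.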

How to check donors for link building?

When looking for donors for outreach or buying links, it is important to carefully check their quality. In addition to the usual DR and UR according to Ahrefs, as well as the smooth growth dynamics of the link profile, we look at other criteria that will help determine whether the donor domain is suitable for cooperation.

First of all, it should be a live site on which articles are published and get into the Google index. To find out whether pages have been indexed recently, enter site: followed by the site's domain in the search bar and, in the search tools, select the period for which you want to see results, such as the past week or month.

Check the keywords. There should not only be a lot of keywords, their number should also grow. If the number of keywords is dropping, think about whether this is a good donor. It may have fallen under Google's filters.

Site traffic should be distributed evenly between pages. If, when analyzing the top pages, you see that one of them collects 40% or 50% of the traffic, this is a risky donor. Imagine that this page falls out of the index for some reason: the traffic of the entire site will immediately drop by almost half. Such a share may also indicate that traffic to the page has been driven artificially. It is better when each of the top pages brings a few percent.
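The keyword and traffic checks above can be expressed as a rough calculation. This is a sketch under stated assumptions: the per-page traffic and keyword counts are exported by hand from whatever SEO tool you use, the function names are hypothetical, and the 40% threshold is taken from the text.

```python
def top_page_share(page_traffic: dict[str, float]) -> tuple[str, float]:
    """Return the busiest page and its share of the site's total traffic."""
    total = sum(page_traffic.values())
    if total <= 0:
        raise ValueError("no traffic data")
    url, visits = max(page_traffic.items(), key=lambda item: item[1])
    return url, visits / total


def keywords_growing(keyword_counts: list[int]) -> bool:
    """True if the latest keyword count is higher than the earliest one."""
    return len(keyword_counts) >= 2 and keyword_counts[-1] > keyword_counts[0]


def is_risky_donor(page_traffic: dict[str, float],
                   keyword_counts: list[int],
                   max_share: float = 0.4) -> bool:
    """Flag a donor if one page dominates traffic or keywords are not growing."""
    _, share = top_page_share(page_traffic)
    return share >= max_share or not keywords_growing(keyword_counts)


# Hypothetical donor: one page collects half of all visits
traffic = {"/a": 500, "/b": 300, "/c": 200}
print(top_page_share(traffic), is_risky_donor(traffic, [100, 120, 150]))
```

A flag from this check is a reason to look closer at the donor, not an automatic rejection.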

How to hire copywriters for long-term cooperation and avoid risks as much as possible

Promoting a site requires content, which means that the SEO department needs a pool of proven copywriters.

When looking for specialists for long-term cooperation, there is a risk of running into so-called ghostwriters, and working with them can bring a lot of headaches with minimal results. This happens when a whole agency works under one name, or when a copywriter takes orders and passes them on to subcontractors, paying them pennies for the work.

How to recognize such ghostwriters:

They do not have social network accounts, or they use stock photos in their profiles;

They do not have a high-quality CV, only a cover letter (or a poorly written CV);

They do not turn on the camera during the interview;

The style of their articles varies, as if they were written by 2-3 people;

After the trial period, the quality of the articles decreases.

When hiring real candidates, there are also several warning signs that it is better to refrain from further cooperation:

The candidate does not want to admit his mistakes;

Speaks badly of former bosses, left work due to conflict;

Can't explain why he left his previous job;

Too much or too little experience;

Does not ask questions;

Ready for any conditions, ready to work with any topics.

But remember that you should not shift all the responsibility for content quality onto the copywriter. Much depends on the brief. The task of an SEO specialist is to create high-quality terms of reference that serve as a framework and guide for the copywriter.

How to optimize JavaScript so that it is seen correctly by search bots

Let's talk about technical SEO and the improvements that can be made in the code to make the site readable by search bots.

If a search engine doesn't crawl JavaScript correctly, it doesn't see the site the way a human would.

What problems can occur during crawling:

The search engine may decide that the site is not adapted for mobile devices.

The design of the site can be perceived as outdated or unattractive.

If some elements appear only after interaction, the search engine does not see them, because it will not register or click on buttons.

Content that takes more than 5 seconds to load may not be seen by the search engine.

What should be done so that rendering works without delays?

Prioritize scripts. Scripts for the first screen should load first, and for this you can embed the JS directly in the HTML.

Primary scripts should reside on your own server, and in no case on a third-party host.

You can add the async attribute to script tags (asynchronous loading of scripts).

You can also delay the loading of JS by placing it at the end of the HTML document. Then the lighter markup will load first.

Check how the elements that matter for SEO are rendered, for example those that contain links, and include them in the first load.
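Some of the recommendations above can be checked with a short audit of a page's script tags. The sketch below uses Python's standard `html.parser` and reports, for each external script, whether it is served from another host and whether it blocks parsing. The class and function names are hypothetical. The `defer` attribute is not mentioned in the text, but it is a standard HTML attribute that also avoids blocking, so it is treated the same way as `async` here.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ScriptAudit(HTMLParser):
    """Collects basic loading facts about every external <script> on a page."""

    def __init__(self, own_host: str):
        super().__init__()
        self.own_host = own_host
        self.findings = []

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        attrs = dict(attrs)
        src = attrs.get("src")
        if not src:
            return  # inline script, nothing to fetch
        host = urlparse(src).netloc  # empty for relative URLs
        self.findings.append({
            "src": src,
            "third_party": bool(host) and host != self.own_host,
            "blocking": "async" not in attrs and "defer" not in attrs,
        })


def audit_scripts(html: str, own_host: str) -> list[dict]:
    parser = ScriptAudit(own_host)
    parser.feed(html)
    parser.close()
    return parser.findings


# Hypothetical page with one third-party blocking script and one async local script
page = ('<html><head><script src="https://cdn.example.net/lib.js"></script>'
        '<script async src="/js/app.js"></script></head></html>')
for finding in audit_scripts(page, "example.com"):
    print(finding)
```

Scripts reported as both third-party and blocking are the first candidates to move to your own server or to load asynchronously.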

For the best effect, you need to choose the rendering method that suits your web resource. There are two of them:

CSR is client-side rendering. JS and CSS live in separate files that are referenced from the HTML document. In this case, the bot processes the site in two steps: first it reads the HTML version, and if everything is fine with it, it follows the references to the remaining files.

SSR is server-side rendering. JS and CSS are embedded in the HTML document, and the page is fully generated before it is sent to the client. The disadvantage of this solution is that the time to first byte increases.

Which of these methods should you choose for better interaction with search engine bots? The more JS elements you have on your site, the more suitable server-side rendering is for you. If your entire site is built on JS, client-side rendering is not for you. A mix of the two options suits sites that have small JS elements that are not a priority for loading.
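One rough way to see how much of a page depends on client-side rendering is to check whether key phrases are present in the raw HTML before any JavaScript runs. The sketch below does this with the standard `html.parser`. The names `VisibleText` and `missing_without_js` are hypothetical, and the check is a simplification that ignores what a search bot may pick up after rendering.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text from the raw HTML, ignoring <script> and <style> bodies."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.chunks.append(data)


def missing_without_js(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the HTML before JS runs."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Hypothetical client-rendered page: the heading exists only inside a script
csr_page = ('<html><body><div id="root"></div>'
            '<script>render("Buy red shoes")</script></body></html>')
print(missing_without_js(csr_page, ["Buy red shoes"]))
```

If important headings, texts or link anchors show up in the returned list, that content reaches the bot only after rendering, which is an argument for server-side rendering of those parts.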

How to deal with duplicate pages on marketplaces

Marketplaces and other sites with many sellers (e.g. classifieds, real estate) often create duplicate pages.

For example, on a marketplace, one entrepreneur adds a product, and then another seller adds the same product. As a result, there are duplicate pages with the same name. How to deal with it?

Add the name of the seller to the title and description.

Add a description of the seller to the description of the product itself, but no more than 30% of the text.

Encourage sellers/agents to create unique photos and product descriptions.

Run a script that will look for duplicate pages from the same seller and merge them.
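The duplicate-search script and the seller-in-title tip can be sketched as follows. This is a minimal illustration under assumptions: listings are given as dictionaries with hypothetical `seller`, `title` and `url` fields, duplicates are detected only by a normalized title, and the title template is made up for the example.

```python
from collections import defaultdict


def normalize(title: str) -> str:
    """Lowercase the title and collapse whitespace so trivial variants match."""
    return " ".join(title.lower().split())


def duplicate_groups(listings: list[dict]) -> dict[tuple[str, str], list[str]]:
    """Group listing URLs by (seller, normalized title); keep groups with duplicates."""
    groups = defaultdict(list)
    for item in listings:
        groups[(item["seller"], normalize(item["title"]))].append(item["url"])
    return {key: urls for key, urls in groups.items() if len(urls) > 1}


def page_title(product: str, seller: str) -> str:
    """Make titles of the same product from different sellers distinct."""
    return f"{product} from {seller}"


# Hypothetical listings: Shop A added the same product twice
listings = [
    {"seller": "Shop A", "title": "Red  Shoes", "url": "/p/1"},
    {"seller": "Shop A", "title": "red shoes", "url": "/p/2"},
    {"seller": "Shop B", "title": "Red shoes", "url": "/p/3"},
]
print(duplicate_groups(listings))
print(page_title("Red shoes", "Shop B"))
```

How the found duplicates are merged, for example by keeping one URL and redirecting the rest, depends on the platform and is left out of the sketch.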

Google Updates in 2022: what they were and what they affected

In the year that is ending, Google presented many different updates, which are traditionally aimed at giving high positions in the ranking to sites created for the benefit of people. In addition, the search engine continues to update its own interface.

Page Experience Update (for desktops) - loading speed, convenience and security of the site have become even more important for ranking on desktop.

Product Reviews Update for sites that publish reviews of services and products. Google wants reviews to be more expert, fact-filled and written by someone who has personally used the product.

Spam Updates - Google continues to fight spam with AI that understands all languages.

Helpful Content Update, which affects sites with little useful content. Google expects sites to provide useful, unique content that helps users solve their problems.

New Features in Google Shopping - for example, customer reviews in snippets and 3D models of products that are assembled from several product photos. All this is done so that the user buys products through Google, which competes with giants such as Amazon in online shopping.

Infinite Scroll - infinite scrolling has been introduced in the search results. So far, it has been implemented only in the mobile version for the English-language segment, but it is likely that we will see it more widely soon.

SEO trends in 2023

And finally, predictions for the next year for SEO specialists from Serhii Koksharov, who closed the conference:

Although they have been talking about the death of SEO for several years, it is not going away. We continue to engage in classic optimization, but let's not forget to make useful content.

Reviews will become important for promotion. Most likely, SEOs will deal not only with links, but also with reviews.

There will be more content created by artificial intelligence and tools capable of analyzing it. Perhaps in the future, everything an SEO specialist does will be generated and implemented by a program on the site.

Not only optimization for search platforms, but also promotion on other global platforms, such as Amazon, YouTube, Twitter and others, will become more relevant. After all, search engines are not the entry point to the Internet for all users.

Google will increasingly search for content within content: certain paragraphs in text, elements in images, timecodes in video.

The behavioral factor will be decisive for search results. Google will increasingly value sites where users stay for a long time rather than returning to the search page.

***

The tips are taken from the lectures of experts in the field of SEO, link building and content creation, who spoke at the conference:

Artem Pylypets, Head of Promotion Department at SEO7

Olesya Korobka, Independent expert

Andriy Tymoshenko, Head of SEO Department at SeoProfy

Nina Romaschenko, SEO Content Team Lead at Genesis

Yana Shulga, Head of Linkbuilding department at Boosta

Anton Daliba, Team Leader of the SEO team at Livepage

Serhii Koksharov, SEO blogger at Devaka.info

The article was created from the materials of the Collaborator conference, which took place on December 9, 2022.