Search bots often crawl a site aggressively, which increases the load on the server. To stop bots from crawling the site, add the following rule to the robots.txt file:

User-agent: *
Disallow: /

This rule forbids all bots that respect robots.txt from crawling any page of the site. Because it blocks the entire site, the site may drop out of search engine results.
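To check what a set of rules actually blocks, you can run it through a robots.txt parser. The sketch below uses Python's standard `urllib.robotparser`; the `can_crawl` helper, the "Googlebot" agent name and the example.com URLs are illustrative assumptions, and real crawlers may handle edge cases differently.

```python
from urllib.robotparser import RobotFileParser

# The rule from above: applies to every user agent and every path.
BLOCK_ALL = """\
User-agent: *
Disallow: /
"""


def can_crawl(rules: str, user_agent: str, url: str) -> bool:
    """Return True if user_agent may fetch url under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(rules.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for url in ("https://example.com/", "https://example.com/blog/post.html"):
        # Both URLs are refused because "/" is a prefix of every path.
        print(url, can_crawl(BLOCK_ALL, "Googlebot", url))
```

Python's parser treats a blank line as the end of a record, so if a blank line separates `User-agent` from `Disallow`, it ignores the `Disallow` line and allows everything. Keeping the directives of one group on consecutive lines avoids that.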

1. Instead of blocking the whole site, you can use the Disallow directive to restrict access only to specific directories, pages, files, or file extensions.

Examples:

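Block a directory:

User-agent: *
Disallow: /directory/

Block a single page:

User-agent: *
Disallow: /privatinfo.php

Block a single file:

User-agent: *
Disallow: /privatpic.jpg

Block all files with a given extension (here, every URL ending in .jpg):

User-agent: *
Disallow: /*.jpg$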
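The last example relies on the `*` and `$` wildcards, which Google documents for robots.txt: `*` matches any sequence of characters and `$` marks the end of the URL. Python's `urllib.robotparser` only does plain prefix matching, so the sketch below translates the patterns into regular expressions by hand. It is a minimal illustration assuming Google-style semantics, not a full robots.txt implementation: it ignores `Allow` lines, user-agent groups and longest-match precedence, and the helper names are hypothetical.

```python
import re
from typing import Iterable


def rule_to_regex(pattern: str) -> "re.Pattern[str]":
    """Translate a Disallow path pattern into a compiled regular expression.

    Assumed Google-style semantics: '*' matches any run of characters,
    a trailing '$' anchors the pattern to the end of the URL, and
    without '$' the pattern only has to match a prefix of the URL.
    """
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape the literal text and join the pieces with '.*' wherever '*' appeared.
    body = ".*".join(re.escape(piece) for piece in pattern.split("*"))
    return re.compile(body + ("$" if anchored else ""))


def is_disallowed(url_path: str, disallow_patterns: Iterable[str]) -> bool:
    """True if any pattern matches starting at the beginning of url_path."""
    return any(rule_to_regex(p).match(url_path) for p in disallow_patterns)


# Disallow values based on the examples above.
RULES = ["/directory/", "/privatinfo.php", "/privatpic.jpg", "/*.jpg$"]

if __name__ == "__main__":
    for path in ("/directory/page.html", "/photos/cat.jpg",
                 "/photos/cat.jpg?w=200", "/index.html"):
        print(path, is_disallowed(path, RULES))
```

With these rules, `/photos/cat.jpg` is blocked but `/photos/cat.jpg?w=200` is not, because `$` requires the URL itself to end in `.jpg`.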

For more details on working with the file, see Google's robots.txt instructions.

2. You can also restrict access to certain pages of the site through the .htaccess file, as described in the instructions.

To block access from a specific IP address, add the following rule:

Order Allow,Deny
Allow from all
Deny from ***.***.***.***
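The `Order Allow,Deny` line decides how the other two directives combine: Allow rules are checked first, then Deny rules, and a matching Deny wins, so every address except the listed one gets through. The Python model below is a hypothetical illustration of that evaluation order, not Apache code. Note that `Order`, `Allow` and `Deny` are Apache 2.2-style directives; on Apache 2.4 they are provided by mod_access_compat. The address 203.0.113.7 is a documentation address standing in for the placeholder.

```python
import ipaddress
from typing import Iterable


def matches(client_ip: str, spec: str) -> bool:
    """True if spec ('all', a single IP, or a CIDR range) covers client_ip."""
    if spec == "all":
        return True
    return ipaddress.ip_address(client_ip) in ipaddress.ip_network(spec, strict=False)


def order_allow_deny(client_ip: str, allow: Iterable[str], deny: Iterable[str]) -> bool:
    """Model of 'Order Allow,Deny': a client gets in only if at least one
    Allow rule matches and no Deny rule matches (Deny wins on overlap)."""
    allowed = any(matches(client_ip, spec) for spec in allow)
    denied = any(matches(client_ip, spec) for spec in deny)
    return allowed and not denied


# Mirrors the .htaccess rule, with 203.0.113.7 as a stand-in for the blocked IP.
ALLOW = ["all"]
DENY = ["203.0.113.7"]
```

Under this order, a client that matches no Allow rule is also refused, which is why the rule includes `Allow from all`.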

Replace ***.***.***.*** with the IP address you want to block. You can find the IP address in the server logs: connect over FTP and open the logs folder.
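If a bot is overloading the site, the address sending the most requests is usually easy to spot in the access log. The sketch below counts requests per client IP. It assumes a common or combined log format, where the client IP is the first field on each line; the `top_ips` name and the command-line usage are hypothetical.

```python
import sys
from collections import Counter
from typing import Iterable, List, Tuple


def top_ips(log_lines: Iterable[str], limit: int = 10) -> List[Tuple[str, int]]:
    """Return the `limit` client IPs with the most requests.

    Assumes each log line starts with the client IP (common/combined log format).
    """
    counts: Counter = Counter()
    for line in log_lines:
        fields = line.split(maxsplit=1)
        if fields:  # skip blank lines
            counts[fields[0]] += 1
    return counts.most_common(limit)


if __name__ == "__main__" and len(sys.argv) > 1:
    # sys.argv[1]: path to an access log copied from the logs folder.
    with open(sys.argv[1], encoding="utf-8", errors="replace") as log_file:
        for ip, hits in top_ips(log_file):
            print(f"{hits:8d}  {ip}")
```

Pass the path to a downloaded log file as the first argument; the addresses at the top of the output are the candidates for the `Deny from` line.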

3. To block access for all IP addresses except Ukrainian ones, follow the instructions.

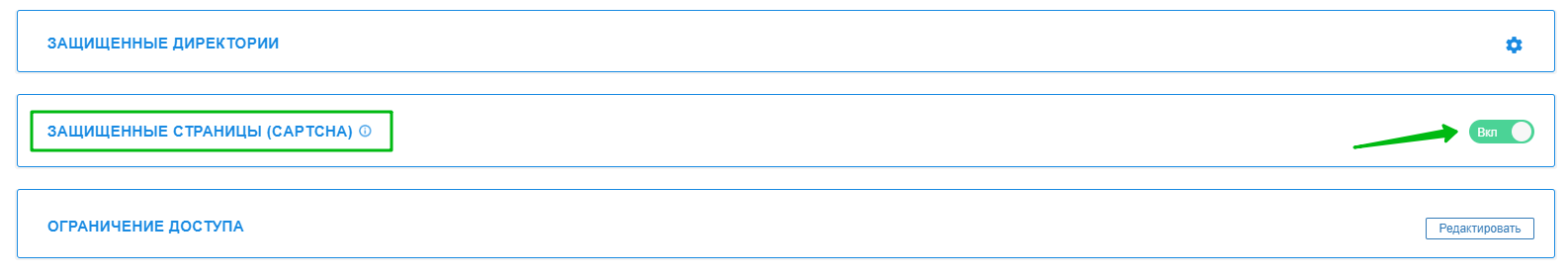

4. You can block bot access to vulnerable pages with the built-in captcha, available in Hosting 2.0 - Sites - Security - PROTECTED PAGES (CAPTCHA)*:

* Captcha is enabled by default for the following pages:

WP LOGIN PAGE: wp-admin, wp-login.php

JOOMLA ADMIN PAGE: /administrator, view=login

JOOMLA REGISTER PAGE: view=registration

OC ADMIN PAGE: /admin

MODX ADMIN PAGE: /manager

PRESTA SHOP ADMIN PAGE: /Backoffice

DRUPAL ADMIN PAGE: /user/login/

For more about robots.txt, see our blog: https://cityhost.ua/blog/chto-takoe-robots-txt-kak-nastroit-robots-txt-dlya-wordpress.html
